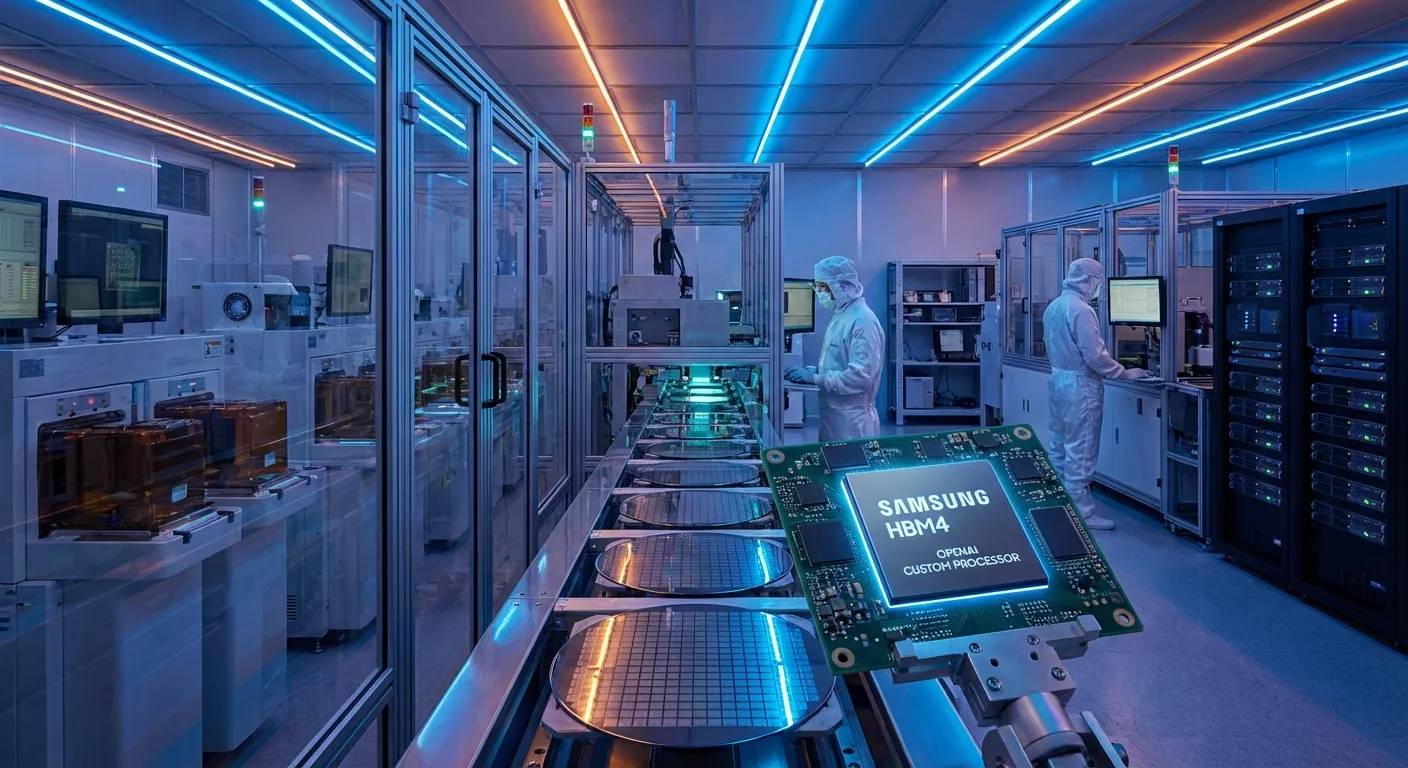

Samsung to Supply HBM4 Chips for OpenAI's First In-House AI Processor

Samsung Electronics has agreed to supply next-generation HBM4 memory chips to OpenAI for use in the ChatGPT maker's first in-house AI processor.

What Does This Mean?

According to a Korea Economic Daily report confirmed by Reuters, Samsung plans to supply OpenAI with HBM4 (High Bandwidth Memory 4) chips, a key component of the processor OpenAI is developing internally.

Background

OpenAI has long relied on NVIDIA GPUs for its AI training and inference needs. Developing a custom processor is a strategic move to reduce this dependency and potentially cut costs substantially, a trend already visible at Google (TPU), Amazon (Trainium/Inferentia), and Microsoft (Maia).

What Is HBM4?

High Bandwidth Memory (HBM) is stacked DRAM placed close to the processor, and it is widely used in AI accelerators. HBM4 is the next generation, offering significantly higher bandwidth and better energy efficiency than HBM3E, which dominates the market today.

Implications for CIOs

This development signals that major AI companies are moving toward vertical integration of their hardware. Over time, that could change the pricing dynamics of AI services and create new supply chains in the semiconductor industry.
