BREAKING: NVIDIA Launches NemoClaw — Enterprise OpenClaw with GPU Acceleration

NVIDIA Confirms: This Is the Future of AI Agent Architecture

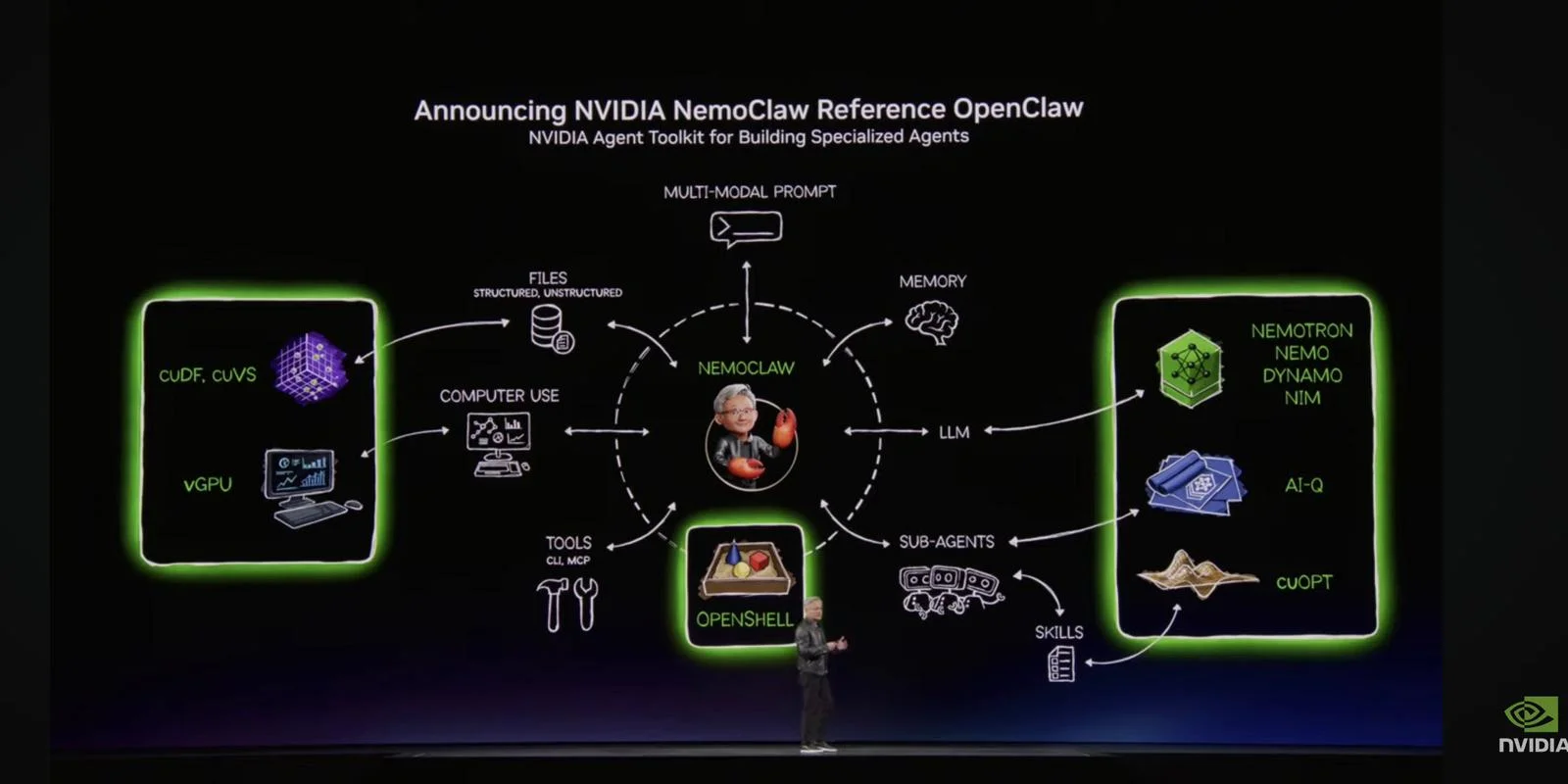

At the GTC 2026 keynote, Jensen Huang unveiled something that stopped everyone familiar with OpenClaw cold: NemoClaw — NVIDIA's reference implementation of an AI Agent Toolkit for Building Specialized Agents.


Jensen Huang presents «Agents — A New Computing Platform» at GTC 2026 in San Jose.

It's no coincidence the architecture looks familiar.

What Is NemoClaw?

NemoClaw is NVIDIA's enterprise-ready version of the architecture powering JP Claw — and the OpenClaw platform in general. From the keynote slide:

- NemoClaw at the center — orchestrating the entire system

- Multi-Modal Prompt — AI understanding text, images, and more

- Files (structured + unstructured) + Memory + LLM

- Computer Use — AI directly operating the computer

- vGPU + cuDF/cuVS — GPU-accelerated data processing

- Tools via OpenShell — CLI and MCP protocol

- Sub-Agents — specialized agents working in parallel

- Skills — modular capabilities agents can learn

- Nemotron/NeMo/Dynamo/NIM — NVIDIA's LLM infrastructure

- AI-Q + cuOPT — optimization and scheduling
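For readers who think in code, the shape of the slide can be sketched in a few lines of Python. This is a toy illustration, not NVIDIA's or OpenClaw's actual API: the names `SubAgent`, `Orchestrator`, `dispatch`, and `build_demo` are hypothetical, skills are plain functions, memory is a simple list, and sub-agents run one after another rather than in parallel.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# Assumption: a "skill" is modeled as a plain function from request text to result text.
Skill = Callable[[str], str]


@dataclass
class SubAgent:
    """A specialized agent that owns a small set of named skills (hypothetical)."""
    name: str
    skills: Dict[str, Skill] = field(default_factory=dict)

    def handle(self, skill: str, request: str) -> str:
        # Fail loudly if the agent was never given this skill.
        if skill not in self.skills:
            raise KeyError(f"{self.name} has no skill {skill!r}")
        return self.skills[skill](request)


@dataclass
class Orchestrator:
    """Central coordinator: routes requests to sub-agents and keeps a memory log."""
    agents: Dict[str, SubAgent] = field(default_factory=dict)
    memory: List[str] = field(default_factory=list)

    def register(self, agent: SubAgent) -> None:
        self.agents[agent.name] = agent

    def dispatch(self, agent: str, skill: str, request: str) -> str:
        result = self.agents[agent].handle(skill, request)
        # Record every interaction so later steps can see earlier context.
        self.memory.append(f"{agent}.{skill}: {request} -> {result}")
        return result


def build_demo() -> Orchestrator:
    """Wire up two toy sub-agents, one skill each."""
    orchestrator = Orchestrator()
    orchestrator.register(SubAgent("calendar", {"book": lambda r: f"booked {r}"}))
    orchestrator.register(SubAgent("email", {"draft": lambda r: f"draft about {r}"}))
    return orchestrator


if __name__ == "__main__":
    demo = build_demo()
    print(demo.dispatch("calendar", "book", "board meeting"))
    print(demo.dispatch("email", "draft", "board meeting"))
    print(len(demo.memory))
```

The point of the sketch is the division of labor: the orchestrator knows which agent to call, each agent knows only its own skills, and memory lives in one shared place.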

For Non-Technical Readers:

Think of it as NVIDIA just launching the industrial version of something we built at home.

| Term | What It Means in Practice |
| --- | --- |
| Sub-agents | Specialized digital employees: one for calendar, one for email, one for code |
| Skills | Modular capabilities agents can learn and combine |
| MCP | A standardized way for AI to use tools, like USB for AI |
| Memory | AI that remembers context over time, not just the last message |
| OpenShell | AI that can use the terminal like an experienced developer |
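For technical readers, the "USB for AI" comparison becomes concrete once you see what travels over the wire. MCP messages are JSON-RPC 2.0, and a tool invocation uses the `tools/call` method with a tool name and an arguments object. The sketch below only builds such a message; the helper `make_tool_call`, the tool name `calendar_lookup`, and its arguments are hypothetical examples, not tools that JP Claw or NemoClaw are known to expose.

```python
import json
from typing import Any, Dict


def make_tool_call(request_id: int, tool_name: str, arguments: Dict[str, Any]) -> str:
    """Serialize an MCP-style `tools/call` request as a JSON-RPC 2.0 message."""
    message = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


if __name__ == "__main__":
    # Hypothetical tool and arguments, for illustration only.
    print(make_tool_call(1, "calendar_lookup", {"date": "2026-03-17"}))
```

Because every tool is called through the same envelope, an agent can pick up a new tool without new integration code, which is the sense in which the protocol works like a standard plug.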

Why This Is Huge

1. NVIDIA Validates the Architecture

This is not a new concept for us — it's the confirmation we needed. Sub-agents, skills, MCP, memory: everything driving JP Claw today is what NVIDIA is now building its enterprise platform on.

2. Enterprise-Ready at Scale

"Enterprise-ready" means large organizations — banks, hospitals, industrial conglomerates — can now deploy this architecture in production with NVIDIA's backing and guarantees.

3. GPU Acceleration Changes Everything

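Today we run agent inference on CPU and cloud APIs. NemoClaw is designed to run on dedicated GPUs, with cuDF accelerating DataFrame processing and cuVS accelerating vector search. For data-heavy agent steps, that could mean dramatically faster agents, with waits of seconds shrinking toward milliseconds.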

4. NemoClaw + Vera Rubin = The Future

Combined with the Vera Rubin architecture (NVIDIA's next GPU generation), this sketches the outline of an AI infrastructure we've never seen before.

My Take: We've Been Running This in Production for Nearly Two Months

I've been following OpenClaw's development closely — and actively using JP Claw in my daily work as a CIO advisor for nearly two months. When I saw the NemoClaw slide, my reaction wasn't surprise.

It was recognition.

The architecture Jensen Huang presented on stage in San Jose is the architecture already orchestrating my meetings, emails, research pipeline, and strategy documents — today, on a Mac mini at home.

NVIDIA isn't confirming we were lucky. They're confirming we chose the right architecture.

For Norwegian CIOs and CEOs, the message is simple: This isn't the future. This is now. Those waiting for "enterprise versions" to arrive will find competitors already have a two-year head start.

The question is no longer whether to deploy AI agents. It's which architecture you choose — and whether you start today or in six months.

Joachim Høgby is a CIO advisor writing about strategic AI adoption for Norwegian businesses.
