NVIDIA releases Nemotron 3 Nano Omni for multimodal AI agents

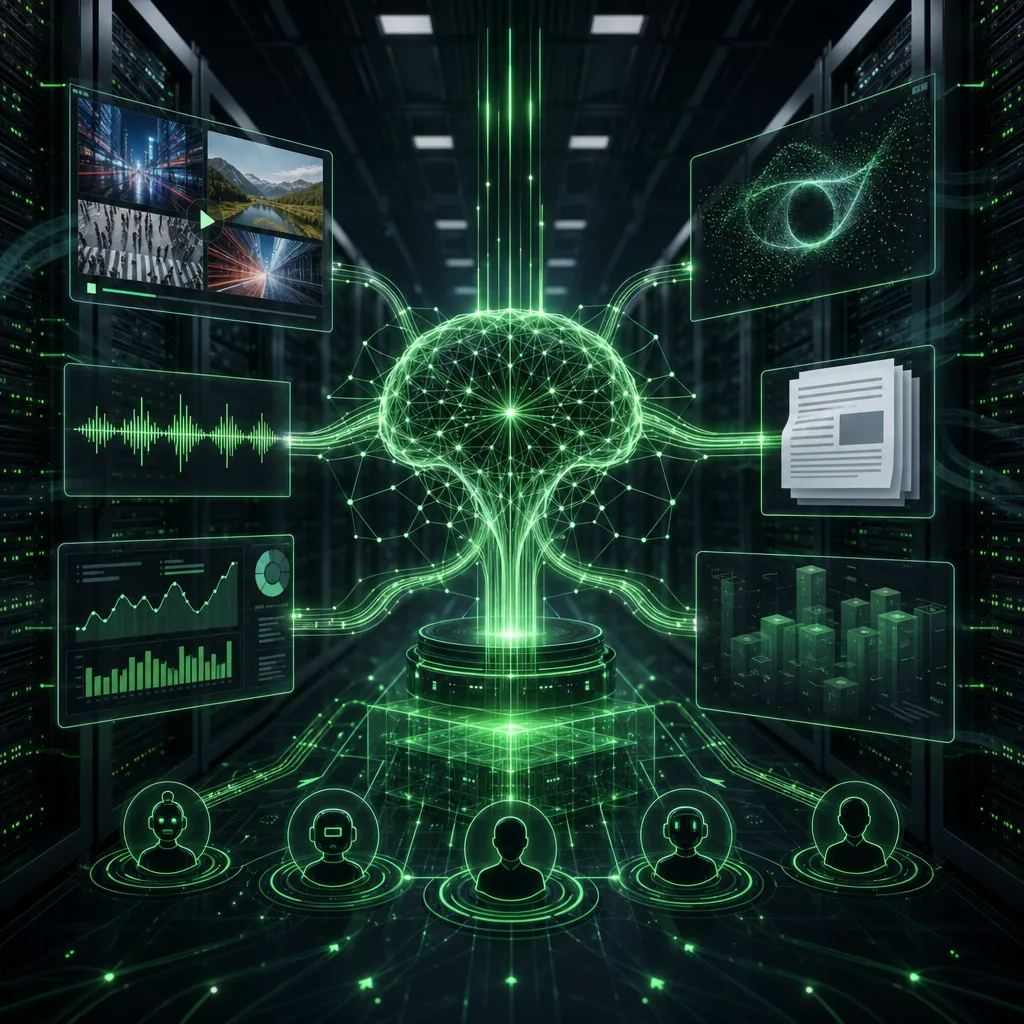

On April 28, 2026, NVIDIA launched Nemotron 3 Nano Omni, an open multimodal model designed to act as the “eyes and ears” inside agentic systems. It brings text, images, audio, video, documents, charts and graphical interfaces into one perception model, so an agent does not have to jump between separate models for each format.

What is new

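Nemotron 3 Nano Omni is a 30B-A3B hybrid mixture-of-experts model with a 256K context window. By the usual naming convention, that means roughly 30 billion total parameters with about 3 billion active per token. NVIDIA says it is available as of April 28 through Hugging Face, OpenRouter, build.nvidia.com and more than 25 partner platforms.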

The point is not to replace every large language model. NVIDIA positions it as a multimodal subsystem inside an agent stack, used alongside models such as Nemotron 3 Super and Ultra or proprietary models. It is meant to interpret screen recordings, documents, audio, video and visual interfaces faster and cheaper than a chain of separate models.
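To make the "subsystem inside an agent stack" idea concrete, here is a minimal sketch of how a perception step can feed a separate reasoning model. Everything in it is an assumption for illustration: `Observation`, `run_agent_step`, `fake_perceive` and `fake_reason` are hypothetical names, and the two stand-in functions take the place of real model calls. It is not NVIDIA's API or reference design.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, Tuple


@dataclass
class Observation:
    modality: str  # e.g. "audio", "pdf", "screenshot"
    summary: str   # text description produced by the perception model


# Hypothetical signatures: each would wrap a real model call in practice.
Perceive = Callable[[str, bytes], str]  # (modality, raw payload) -> text summary
Reason = Callable[[str], str]           # prompt -> plan or answer


def run_agent_step(
    inputs: Iterable[Tuple[str, bytes]],
    perceive: Perceive,
    reason: Reason,
    task: str,
) -> str:
    """Send every raw input through one perception model, then hand the
    combined text observations to a separate reasoning model."""
    observations = [Observation(m, perceive(m, data)) for m, data in inputs]
    context = "\n".join(f"[{o.modality}] {o.summary}" for o in observations)
    return reason(f"Task: {task}\nObservations:\n{context}")


# Stand-ins so the sketch runs without any model behind it.
def fake_perceive(modality: str, payload: bytes) -> str:
    return f"{len(payload)} bytes of {modality}"


def fake_reason(prompt: str) -> str:
    seen = sum(1 for line in prompt.splitlines() if line.startswith("["))
    return f"plan based on {seen} observations"


if __name__ == "__main__":
    result = run_agent_step(
        [("audio", b"abc"), ("pdf", b"hello")],
        fake_perceive,
        fake_reason,
        task="triage support ticket",
    )
    print(result)
```

The design point is that the reasoning model only ever sees text, so the perception model can be swapped or self-hosted without touching the rest of the agent.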

NVIDIA claims up to 9x higher throughput than other open omni models with similar interactivity. The company also points to document, video and audio benchmarks, but leaders should treat those as vendor benchmarks until they are tested in their own workflows.
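One way to test a vendor throughput claim on your own workload is a small timing harness like the sketch below. It assumes the model is wrapped in a plain Python callable; `measure_throughput` and `model_call` are hypothetical names, not part of any NVIDIA tooling. It measures sequential requests only, so it is a rough first check rather than a reproduction of NVIDIA's benchmark setup, which involves concurrent serving.

```python
import time
from typing import Callable, Dict, Sequence


def measure_throughput(
    model_call: Callable[[str], str],
    samples: Sequence[str],
) -> Dict[str, float]:
    """Run each sample through model_call one at a time and report
    request rate and latency statistics."""
    latencies = []
    start = time.perf_counter()
    for sample in samples:
        t0 = time.perf_counter()
        model_call(sample)  # replace with a real call to the model under test
        latencies.append(time.perf_counter() - t0)
    elapsed = time.perf_counter() - start

    return {
        "requests": len(samples),
        "requests_per_second": len(samples) / elapsed if elapsed > 0 else float("inf"),
        "mean_latency_s": sum(latencies) / len(latencies) if latencies else 0.0,
        "max_latency_s": max(latencies, default=0.0),
    }


if __name__ == "__main__":
    # Stand-in model so the harness runs without any backend.
    stats = measure_throughput(lambda text: text.upper(), ["a", "b", "c"])
    print(stats)
```

Running the same harness against two candidate models with the same sample set, drawn from real support recordings or documents, gives a like-for-like comparison that vendor numbers cannot.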

Why it matters

For companies building agents, multimodal input is often the expensive and fragile part. Customer support recordings, PDFs, screenshots, spreadsheets, video and speech need to be understood before the agent can act. If one open model can handle more of that with lower latency and lower cost, production agents become easier to operate.

This is especially relevant for companies that need local control, self-hosting options and document-heavy workflows. A smart top model is not enough. The agent also needs a reliable sensory layer.

Source and date check

The original source is NVIDIA’s own blog post, "NVIDIA Launches Nemotron 3 Nano Omni Model", published on April 28, 2026. NVIDIA also published a technical blog the same day with architecture details, availability and benchmark references.

Sources: https://blogs.nvidia.com/blog/nemotron-3-nano-omni-multimodal-ai-agents/ and https://developer.nvidia.com/blog/nvidia-nemotron-3-nano-omni-powers-multimodal-agent-reasoning-in-a-single-efficient-open-model
